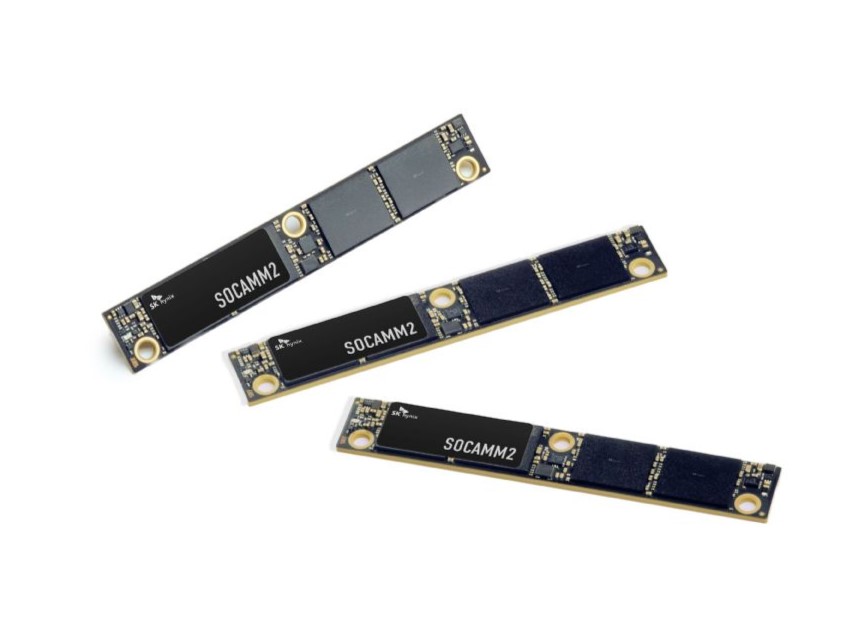

SK Hynix Begins Mass Production of 192GB SOCAMM2

On April 20, 2026, SK Hynix announced that it has begun mass production of its 192GB SOCAMM2 module, built on sixth-generation 1c LPDDR5X low-power DRAM. It is the industry's first SOCAMM2 module to use the 1c process node, and a significant step in adapting mobile low-power memory for server environments. Volume deployment is expected as early as the second half of 2026, alongside NVIDIA's next-generation platform.

NVIDIA Vera Rubin Platform Integration

The SOCAMM2 module now in mass production has been purpose-built for NVIDIA's next-generation AI platform, "Vera Rubin." In this architecture, SOCAMM2 sits on the Vera CPU side and complements the HBM on the GPU side, forming a three-tier memory hierarchy of "high-bandwidth HBM, low-power SOCAMM, and standard DDR5." This tiered design is intended to ease the memory bottlenecks that arise during training and inference of very large models, improving overall system throughput.

Performance Leap

Compared to conventional server RDIMM modules, the 192GB SOCAMM2 delivers two core improvements: bandwidth more than doubles, while power consumption falls by over 75%. Its per-pin data transfer rate rises from the previous-generation SOCAMM1's 8.5 Gbps to 9.6 Gbps. In addition, a compression connector design strengthens signal integrity and allows modules to be replaced individually, which simplifies server operation and maintenance. The move to an LPDDR architecture also translates into lower data center power and cooling costs.

Market Positioning

As the focus of AI development shifts from training to inference, SOCAMM2, which supports low-power operation of large language models, is emerging as a key option among next-generation memory solutions. SK Hynix says it has established a stable mass-production system to meet the volume requirements of global Cloud Service Providers (CSPs). Justin Kim, Head of AI Infrastructure, stated that by supplying 192GB SOCAMM2, SK Hynix has set a new standard for AI memory performance and reinforced its position as the most trusted memory solutions provider for global AI customers.

Competitive Landscape

Micron had already begun shipping 256GB SOCAMM2 samples in March, a capacity lead of about 33% over the 192GB modules. Samsung, meanwhile, has addressed module warpage through its proprietary low-temperature solder technology and has also reached mass production of 192GB products. SK Hynix now joins the competition, relying on the power-efficiency advantages of its 1c process. All three suppliers are expected to showcase their latest products during NVIDIA GTC 2026. NVIDIA's SOCAMM2 demand for 2026 alone is estimated to reach tens of billions of Gb, creating substantial incremental value for the supply chain.
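The percentages quoted in this article can be checked with simple arithmetic. The following is a minimal sketch; the helper name `percent_increase` is hypothetical and introduced only for illustration, and the inputs are the figures stated above (192GB vs. 256GB capacity, 8.5 Gbps vs. 9.6 Gbps data rate).

```python
def percent_increase(baseline: float, new_value: float) -> float:
    """Return how much larger new_value is than baseline, in percent."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (new_value - baseline) / baseline * 100.0


# Capacity: 256GB samples compared with 192GB modules (figures from the article).
capacity_lead = percent_increase(192, 256)

# Data rate: SOCAMM2 at 9.6 Gbps compared with SOCAMM1 at 8.5 Gbps.
data_rate_gain = percent_increase(8.5, 9.6)

print(f"Capacity lead: {capacity_lead:.1f}%")
print(f"Data rate gain: {data_rate_gain:.1f}%")
```

Under these inputs the capacity lead works out to roughly one third, in line with the 33% figure, and the generation-over-generation data rate gain to roughly 13%.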

CONEVO ICs Independent Distributor

CONEVO is a global independent distributor specializing in high-performance semiconductors, particularly FPGAs, MCUs, DSPs, data converters, and MLCCs. CONEVO Featured IC Recommendations:

● CLVC1G125QDBVRQ1: An automotive-grade single-channel bus buffer gate with tri-state output, operating over a wide 1.65V - 3.6V supply range.

● ADV7123KSTZ140: An ADI high-speed triple 10-bit video DAC with a 140MHz conversion rate and TTL-compatible inputs.

● GD25Q64ESIGR: A GigaDevice 64Mb SPI NOR Flash memory, supporting 4KB sector erase and a maximum clock frequency of 133MHz.

Website: www.conevoelec.com

Email: info@conevoelec.com